Fri, 20 Aug 2021, 02:00 PM – 05:10 PM IST

Sreenath S Kamath

@sree92

Submitted Apr 9, 2021

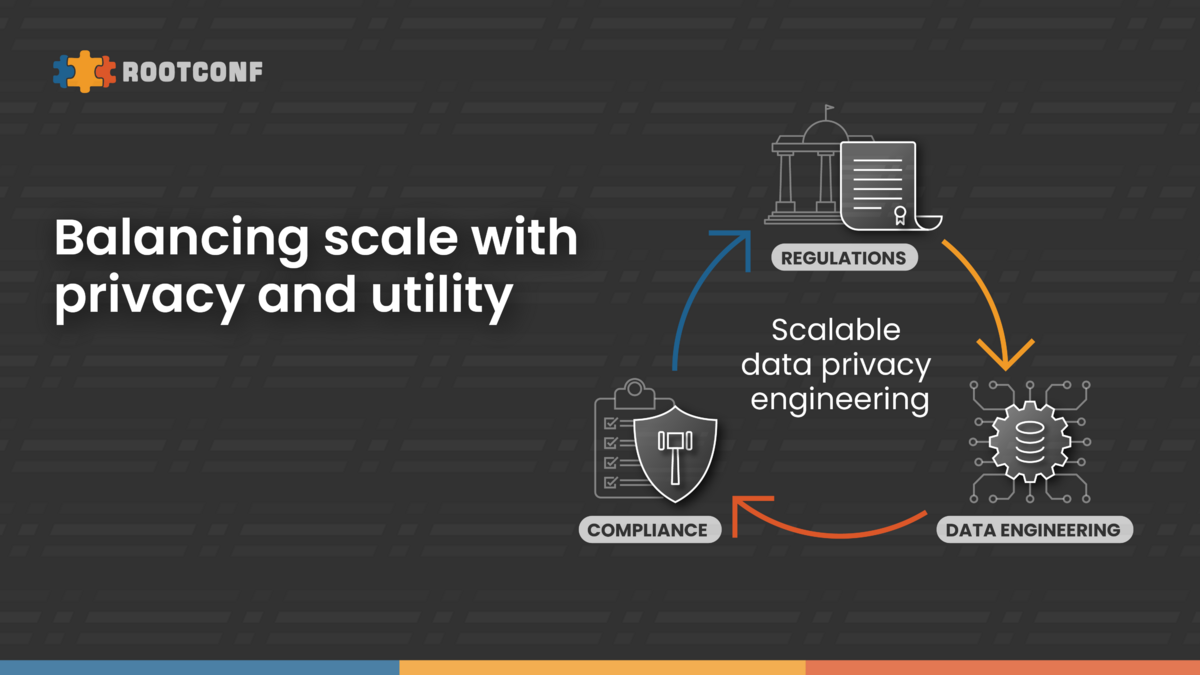

In a world where we collect as much user data as we can to make each user's journey as personalized as possible, it is equally important to meet compliance requirements under which a user can request either deletion (forget) or a copy (access) of their data. For us, as the centralized data-platform team at Disney+ Hotstar, this was a huge challenge. We owned the platform, but various teams owned the data. That meant we had to give every team a distributed way to support data-subject requests, while also coordinating and orchestrating those requests across all of them. In this talk, we discuss the approaches we considered and the architecture we built for handling data-subject requests, which scales as more systems, and the data they use, are added to the ecosystem.
