Over the course of my career I’ve gone through the many stages of grief; I’ve become angry at the poor quality of my data, I’ve attempted to bargain with engineering/PMs/etc for better data, and I became depressed over the issue. Now I’ve reached the final stage; I accept that my data is bad. Given that my data is bad, I then attempt to model it’s badness, and use that model to correct for the biases introduced.

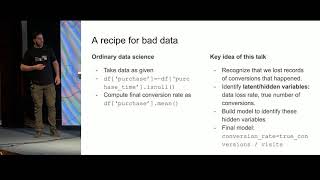

In this talk I’ll discuss how I approach bad data; I accept that I cannot fix it and instead try to model where it came from. This usually involves getting a more detailed grasp of the data generating process and writing down a formal model.

In many cases this enables me to use the data model to correct and enhance my predictive model, as well as provide useful measurements and insights for improving and repairing the data collection process.

This talk is about bad data, and how to deal with it. It is NOT about improving data collection, correcting broken data, monitoring, etc.

To start with I’ll discuss the problem of data that is collected incorrectly, and focus on a couple of examples:

- Data that is randomly missing, perhaps due to a malfunctioning tracking pixel.

- Data that is mislabelled, perhaps due to data collection partners who use slightly different processes to collect it.

I’ll discuss particular fixes for these, both to correct for biases introduced by incorrect data, and to understand how bad the data collection is.

Then I’ll move on to data which is fundamentally bad.

The first example I’ll cover is delayed reactions. When measuring ad clicks, the time between display and click is nearly instantaneous (minutes at most). When measuring clicks on links contained in an email, the time can be quite significant (days). The same is true for many relevant scenarios, including debt collection (e.g. at Simpl it takes 30 days to know if a user is delinquent).

I’ll discuss a technique for modeling and correcting for the bias introduced by delays, which comes by modeling the delay via survival models.

The second example I’ll cover is selection bias caused by using your model. In particular, I’ll show discuss why height appears to be uncorrelated with player performance in the National Basketball Association and why GRE does not seem to predict academic performance among students admitted to graduate school.

The conclusion I want everyone to take away from this is that bad data is not a show stopper. It’s also not something you’re helpless to do anything about. Rather, it’s an obstacle, but one that can be overcome (or at least mitigated) with careful modeling.

It would be useful to be familiar with Bayes rule and a bit of linear algebra.

Chris is currently the head of data science at Simpl, India’s top Pay Later platform. In past lives he’s been a physicist, a high frequency stock trader, an automated marketer, a bodyguard and a nootropic drug courier. He’s a strong believer in correct statistics, clean code, and putting skin in the game to demonstrate your beliefs.

https://www.chrisstucchio.com/pubs/slides/fifth_elephant_2019/bad_data.pdf

{{ gettext('Login to leave a comment') }}

{{ gettext('Post a comment…') }}{{ errorMsg }}

{{ gettext('No comments posted yet') }}